Most enterprises now have governance frameworks for financial decisions, data privacy, and cybersecurity. Yet when it comes to AI, arguably the most transformative and risk-laden technology they will ever deploy, many organizations still operate without any formal governance structure whatsoever.

The consequences are predictable. AI tools proliferate without oversight. Risk assessments are skipped in favor of speed. Accountability is murky when something goes wrong. Compliance obligations are missed. And the organization ends up reactively managing AI incidents after they happen rather than preventing them.

An AI Governance Committee changes that. When structured correctly, it creates a cross-functional body with the authority, expertise, and mandate to ensure AI is deployed responsibly, securely, and in alignment with the organization’s values and obligations. It doesn’t slow AI down; it makes AI sustainable.

This guide covers everything you need to build one: who should be on it, what it should own, how it should operate, and the best practices that separate committees that drive real change from those that exist only on paper.

What Is an AI Governance Committee?

An AI Governance Committee (sometimes called an AI Ethics Board, AI Risk Council, or AI Steering Committee) is a cross-functional body responsible for overseeing the development, procurement, deployment, and ongoing management of artificial intelligence systems within an organization.

Unlike a single team or department, a governance committee brings together stakeholders from across the business (technology, security, legal, compliance, ethics, operations, and executive leadership) to ensure that AI decisions are made with full visibility into their implications.

The committee is not an approval bottleneck. Its goal is to create clear, consistent processes that allow the organization to move confidently on AI initiatives, knowing that risk has been assessed, accountability has been assigned, and the right controls are in place.

Why You Need One Now

The case for an AI Governance Committee has never been stronger or more urgent. Several forces are converging to make formal AI governance a business necessity rather than an optional best practice.

Regulatory Pressure Is Intensifying

The EU AI Act, the NIST AI Risk Management Framework, ISO 42001, and a growing body of sector-specific regulations are establishing formal expectations for AI oversight, documentation, and accountability. Some of these are binding law and others are voluntary frameworks or standards, but many of them expect organizations to demonstrate that governance structures are in place. A committee provides the organizational foundation for compliance.

AI Risk Is Increasingly Material

Boards and investors are beginning to ask hard questions about AI risk. SEC guidance has signaled that material AI-related risks may require disclosure. As AI becomes more deeply embedded in business operations, the governance structures around it become directly relevant to enterprise risk management and to the confidence that investors, partners, and customers place in the organization.

AI Incidents Are Becoming More Frequent and More Costly

From biased hiring algorithms to AI-generated misinformation to data breaches caused by insecure AI deployments, AI incidents are happening more frequently and at greater scale. Organizations with governance structures in place are better positioned to prevent, detect, and respond to these incidents and to demonstrate due diligence when regulators or courts inquire.

Core Roles and Responsibilities

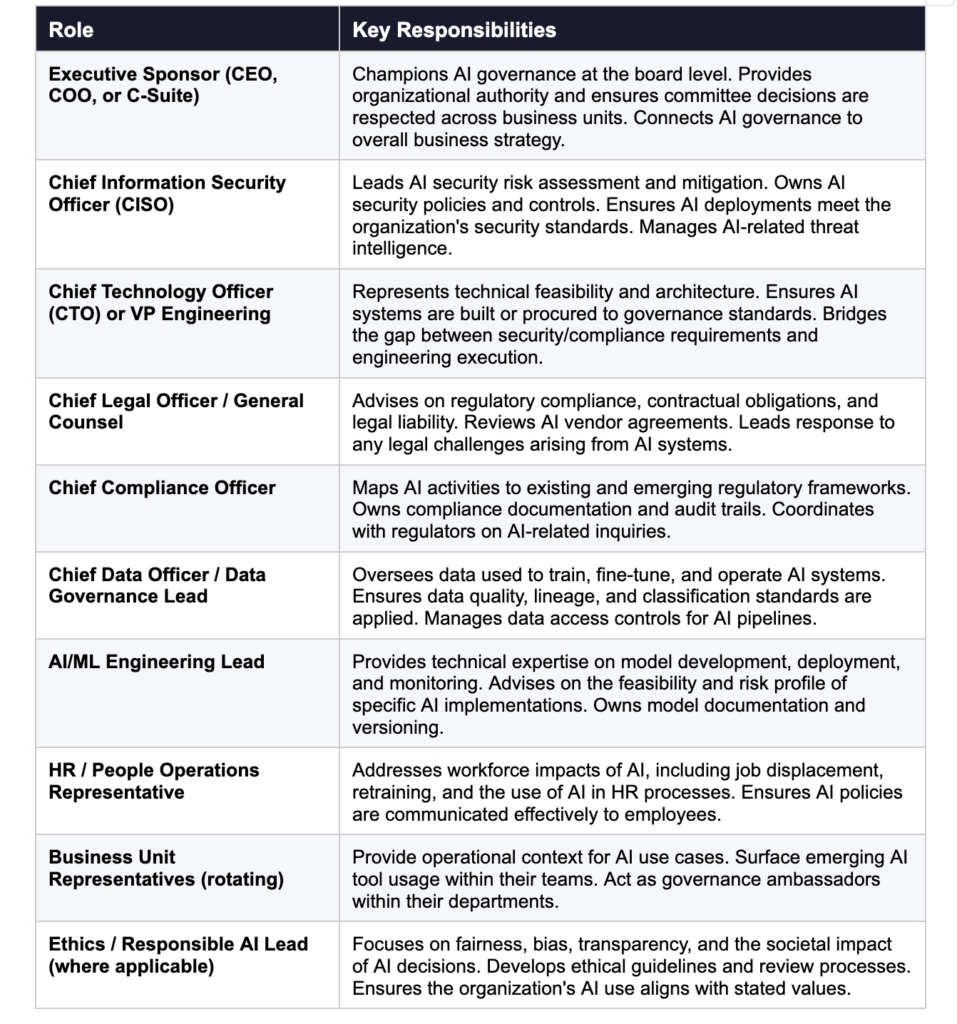

The composition of your AI Governance Committee should reflect the breadth of AI’s impact across your organization. Here are the core roles that every committee should include, along with what each brings to the table.

What the Committee Should Own

Clarity of mandate is one of the most important determinants of a governance committee’s effectiveness. Without a clear scope, committees either become rubber stamps that approve everything or bottlenecks that block everything. Here is what a well-structured AI Governance Committee should own:

AI Inventory and Classification

The committee should maintain a comprehensive, up-to-date inventory of all AI systems in use or under development across the organization. Each system should be classified by risk level, using a framework such as the EU AI Act’s risk tiers or the NIST AI RMF, to determine the appropriate level of oversight and control required.
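As a rough illustration, here is a minimal Python sketch of what an inventory entry and a risk classification step might look like. The four tiers are loosely modeled on the risk categories commonly associated with the EU AI Act; the field names, the `classify` helper, and its rules are hypothetical placeholders, not a legal classification.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    """Illustrative tiers, loosely modeled on the EU AI Act's risk categories."""
    MINIMAL = 1
    LIMITED = 2
    HIGH = 3
    UNACCEPTABLE = 4


@dataclass
class AISystem:
    """One entry in the AI inventory. All field names are hypothetical."""
    name: str
    owner: str
    vendor: Optional[str]        # None for internally built systems
    use_case: str
    affects_individuals: bool    # e.g. hiring, lending, or medical decisions
    interacts_with_public: bool  # e.g. a customer-facing chatbot


def classify(system: AISystem) -> RiskTier:
    """Toy rule for illustration; a real rule would come out of legal review."""
    if system.affects_individuals:
        return RiskTier.HIGH
    if system.interacts_with_public:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


inventory = [
    AISystem("resume-screener", "HR", "ExampleVendor", "candidate ranking", True, False),
    AISystem("support-chatbot", "Customer Success", None, "answering FAQs", False, True),
    AISystem("log-summarizer", "IT", None, "summarizing server logs", False, False),
]

# Map each system name to its assigned tier.
tiers = {system.name: classify(system) for system in inventory}
```

Keeping the classification rule in one function makes it easy for the committee to revise the criteria later without touching the inventory records themselves.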

AI Procurement and Approval

Any new AI tool, platform, or model, whether built internally or procured from a vendor, should go through a defined review process before deployment. The committee should establish clear criteria for approval, including security posture, data handling practices, regulatory compliance, ethical considerations, and alignment with business objectives. High-risk AI systems should require full committee review; lower-risk tools can move through a lighter-weight process.
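The tiered review described above could be sketched along the following lines. This is only an illustration in Python: the criteria names mirror the list in the paragraph, while `ReviewRequest`, `route`, and the route labels are assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Approval criteria taken from the paragraph above; the identifiers are illustrative.
CRITERIA = (
    "security_posture",
    "data_handling",
    "regulatory_compliance",
    "ethical_considerations",
    "business_alignment",
)


@dataclass
class ReviewRequest:
    """A hypothetical submission for a new AI tool, platform, or model."""
    tool: str
    high_risk: bool
    criteria_met: Dict[str, bool] = field(default_factory=dict)


def route(request: ReviewRequest) -> str:
    """High-risk systems go to the full committee; everything else is fast-tracked."""
    return "full_committee_review" if request.high_risk else "fast_track_review"


def unmet_criteria(request: ReviewRequest) -> List[str]:
    """List criteria that are missing or explicitly marked as not met."""
    return [c for c in CRITERIA if not request.criteria_met.get(c, False)]


def ready_for_approval(request: ReviewRequest) -> bool:
    """A request is ready only when every criterion has been satisfied."""
    return not unmet_criteria(request)


request = ReviewRequest(
    tool="contract-summarizer",
    high_risk=True,
    criteria_met={"security_posture": True, "data_handling": True},
)
```

Here `route(request)` sends the tool to full committee review, and `unmet_criteria(request)` shows the reviewers which criteria still need evidence before a decision can be made.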

AI Policy Development and Enforcement

The committee owns the organization’s AI usage policies. This includes acceptable use policies for employees, data handling requirements for AI systems, vendor and third-party AI standards, and policies governing the use of AI in high-stakes decisions such as hiring, lending, or medical diagnosis. Policies should be reviewed and updated at least annually or whenever significant regulatory or technological changes occur.

Risk Assessment and Monitoring

The committee should establish a repeatable risk assessment process for AI systems, covering security vulnerabilities, bias and fairness issues, privacy risks, and operational reliability. Approved AI systems should be subject to ongoing monitoring, with regular reporting to the committee on key risk metrics, incidents, and near-misses.

Incident Response and Accountability

When an AI system fails, whether due to a security breach, a biased output, a regulatory violation, or an operational error, the committee should ensure that a response process is activated, root cause analysis is conducted, and accountability is assigned. The committee should also review post-incident findings to determine whether governance processes need to be strengthened.

Regulatory Tracking and Compliance Reporting

The AI regulatory landscape is evolving rapidly. The committee should maintain active awareness of relevant regulations and standards (including the EU AI Act, NIST AI RMF, ISO 42001, and sector-specific requirements) and ensure the organization’s AI governance practices remain compliant. This includes maintaining documentation and audit trails sufficient to demonstrate compliance to regulators.

How the Committee Should Operate

Structure without process produces committees that meet but don’t govern. Here’s how to design an operating model that creates real accountability.

Meeting Cadence

Most AI Governance Committees benefit from a tiered meeting structure. A full committee meeting should be held monthly or quarterly to review the AI portfolio, assess emerging risks, make high-level policy decisions, and receive reports from working groups. Smaller working groups focused on specific areas like security, compliance, or ethics can meet more frequently to do detailed work and bring recommendations to the full committee.

Decision Rights and Escalation Paths

The committee should have a documented decision rights framework that clarifies which decisions can be made by working groups, which require full committee approval, and which need to be escalated to the board or executive leadership. This prevents both under-governance (decisions made without proper review) and over-governance (every decision requiring full committee sign-off).
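One lightweight way to document decision rights is a simple lookup table. The Python sketch below is illustrative only: the decision types and their assignments are hypothetical examples, and each organization would define its own.

```python
from enum import Enum


class Body(Enum):
    WORKING_GROUP = "working group"
    FULL_COMMITTEE = "full committee"
    BOARD = "board or executive leadership"


# Hypothetical mapping of decision types to the body that owns them.
DECISION_RIGHTS = {
    "approve_low_risk_tool": Body.WORKING_GROUP,
    "approve_high_risk_system": Body.FULL_COMMITTEE,
    "change_ai_policy": Body.FULL_COMMITTEE,
    "accept_material_risk": Body.BOARD,
}


def who_decides(decision_type: str) -> Body:
    """Return the body that owns a decision.

    Unlisted decision types default to the full committee, so nothing
    is decided without review simply because it was never categorized.
    """
    return DECISION_RIGHTS.get(decision_type, Body.FULL_COMMITTEE)
```

Defaulting unknown decisions to the full committee guards against under-governance, while the explicit working-group entries keep routine decisions from piling up on the committee agenda.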

Documentation and Audit Trails

Every significant decision made by the committee (approvals, denials, risk acceptances, policy changes) should be documented. This documentation serves multiple purposes: it creates an audit trail for regulators, it provides context for future decisions, and it holds the committee accountable to its own processes. Meeting minutes, risk assessment records, and approval documentation should be retained according to the organization’s document retention policy.
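A decision log does not need to be elaborate to be useful. The following Python sketch shows one possible shape for a decision record, serialized as a single JSON line suitable for an append-only log; the field names and the `to_log_line` helper are assumptions for illustration.

```python
import json
from dataclasses import asdict, dataclass

# Decision types named in the paragraph above; the identifiers are illustrative.
ALLOWED_DECISIONS = {"approval", "denial", "risk_acceptance", "policy_change"}


@dataclass(frozen=True)
class DecisionRecord:
    """One committee decision. Frozen so a record cannot be altered after creation."""
    decided_on: str   # ISO 8601 date, e.g. "2025-01-15"
    subject: str      # the system or policy the decision concerns
    decision: str     # one of ALLOWED_DECISIONS
    rationale: str
    decided_by: str   # e.g. "full committee" or a named working group


def to_log_line(record: DecisionRecord) -> str:
    """Serialize a record as one JSON line for an append-only audit log."""
    if record.decision not in ALLOWED_DECISIONS:
        raise ValueError(f"unknown decision type: {record.decision}")
    return json.dumps(asdict(record), sort_keys=True)


record = DecisionRecord(
    decided_on="2025-01-15",
    subject="resume-screener",
    decision="approval",
    rationale="Bias testing completed; quarterly monitoring required.",
    decided_by="full committee",
)
line = to_log_line(record)
```

Capturing the rationale alongside the outcome is what gives future committee members the context for why a decision was made, not just what it was.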

Reporting to the Board

The AI Governance Committee should report regularly to the board of directors: at minimum annually, and more frequently if significant risks or incidents arise. Board reporting should cover the organization’s AI risk posture, key governance metrics, significant incidents and remediation status, regulatory developments and compliance status, and emerging risks on the horizon.

Best Practices for an Effective AI Governance Committee

Drawing on experience with enterprise governance structures and the specific demands of AI oversight, here are the best practices that distinguish committees that drive real change from those that exist only on paper.

- Start with scope, not structure. Before you define roles and meeting schedules, define what the committee is responsible for. A committee with a clear mandate is far more effective than one that spends its early meetings debating what it should be doing.

- Secure executive sponsorship from day one. An AI Governance Committee without executive backing will struggle to enforce its decisions. Make sure your executive sponsor has real authority and is visibly committed to the committee’s mandate.

- Design for speed as well as rigor. A governance process that takes three months will be bypassed. Design your review process to match risk level: fast-track for low-risk tools, full review for high-risk systems. Speed and rigor are not mutually exclusive.

- Build the AI inventory before anything else. You cannot govern what you cannot see. One of the committee’s first orders of business should be a comprehensive audit of all AI tools and systems currently in use across the organization, including Shadow AI.

- Adopt a recognized framework. Rather than building your governance framework from scratch, adopt and adapt an established framework such as the NIST AI RMF or ISO 42001. This gives you a credible foundation and makes compliance demonstration significantly easier.

- Rotate business unit representatives. Including rotating representatives from different business units keeps the committee grounded in operational reality and builds governance awareness across the organization. It also prevents the committee from becoming an insular group disconnected from how AI is actually being used.

- Invest in AI literacy for all committee members. Governance decisions are only as good as the understanding behind them. Ensure all committee members, including legal, HR, and compliance representatives, have sufficient AI literacy to participate meaningfully in risk discussions.

- Make the process visible to employees. Employees should know that an AI governance process exists, how to submit new tools for review, and where to go with concerns. Transparency builds trust and reduces the incentive to bypass governance channels.

- Review and iterate. AI is evolving faster than any governance framework can anticipate. Build regular retrospectives into your committee calendar, at least annually, to assess what’s working, what’s not, and how the framework needs to evolve.

Common Pitfalls to Avoid

Even well-intentioned governance committees can fall into patterns that undermine their effectiveness. Here are the most common pitfalls and how to avoid them.

- Treating governance as a compliance checkbox. Governance that exists only to satisfy auditors won’t protect your organization. Build processes that create genuine oversight, not just documentation.

- Over-indexing on technology, under-indexing on people. AI governance tools and platforms are valuable, but they don’t replace the human judgment that effective governance requires. Invest in people and process first.

- Creating a committee without authority. A committee that can only recommend but not enforce is limited in its effectiveness. Ensure the committee has real authority to approve, deny, and require remediation.

- Ignoring low-risk AI. Not every AI tool needs a full governance review, but every tool needs some level of awareness and tracking. Don’t let low-risk tools fall entirely outside your governance perimeter.

- Failing to communicate decisions. If employees don’t know what the committee has decided and why, they can’t comply. Build communication channels that keep the organization informed of governance decisions and policy updates.

The Bottom Line

An AI Governance Committee is not a bureaucratic formality. It’s the organizational infrastructure that makes responsible, sustainable AI adoption possible. With the right people, a clear mandate, an effective operating model, and a commitment to genuine oversight rather than checkbox compliance, it becomes one of the most valuable bodies in your enterprise.

The organizations building these structures today are the ones that will be able to move confidently on AI tomorrow, because they’ll have the governance foundation that makes bold AI adoption safe.

Ready to build your AI governance framework?

AccuroAI’s AI Security & Governance Platform gives your committee the visibility, risk assessment tools, and compliance documentation capabilities it needs to govern AI effectively at the speed your business demands.