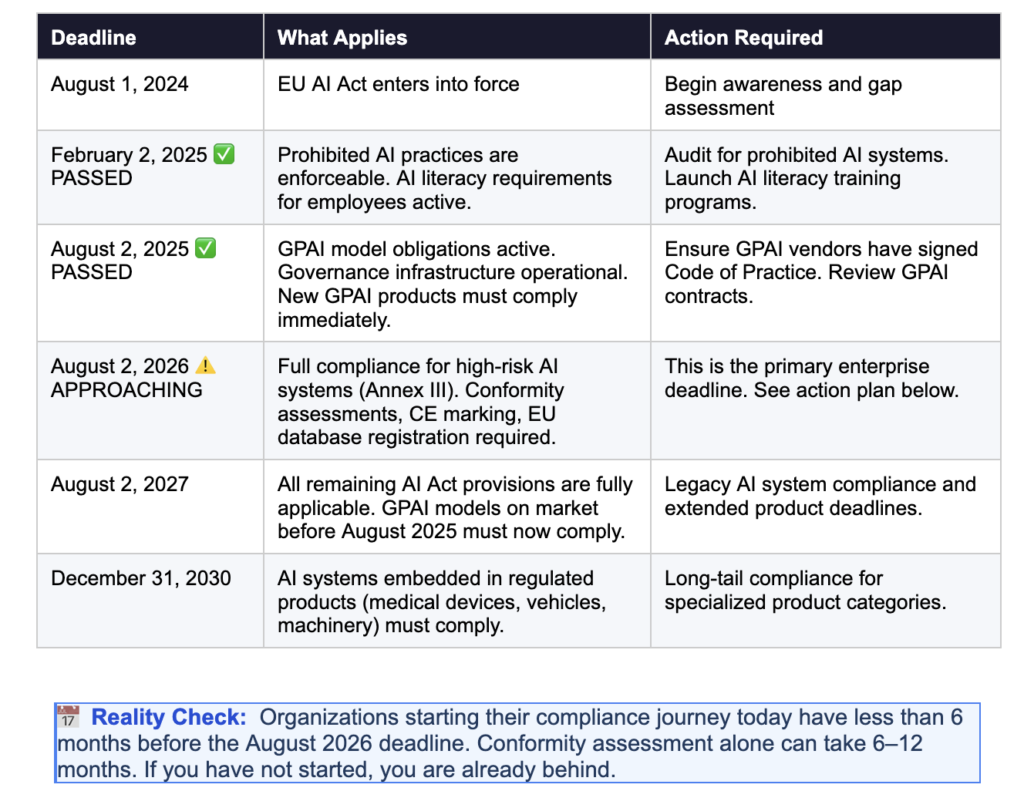

EU AI Act compliance is no longer something enterprises can plan for later. It is happening right now. The world’s first comprehensive legal framework governing artificial intelligence, Regulation (EU) 2024/1689, entered into force in August 2024, and its enforcement timeline is moving fast. Prohibited AI practices have been banned since February 2025. General-Purpose AI obligations took effect in August 2025. And the most consequential deadline for most enterprises, full compliance for high-risk AI systems, arrives on August 2, 2026.

The penalties for non-compliance are severe: for the most serious violations, fines reach up to €35 million or 7% of global annual turnover, whichever is higher. National AI authorities across EU member states are now fully operational and actively monitoring implementation. Finland became the first active national enforcer in January 2026. Others are following.

And yet, the readiness data is sobering. Analysis of enterprise compliance posture suggests that over half of organizations currently lack a systematic inventory of AI systems in production, the most basic prerequisite for compliance. Many are applying standard software procurement practices to AI without recognizing the new regulatory requirements. Most have significant documentation gaps that would not survive a regulatory audit.

This guide is designed to change that. Whether you are starting your compliance journey or accelerating an existing program, what follows is everything your enterprise needs to understand about the EU AI Act: the risk tiers, the deadlines, what compliance actually requires, what non-compliance costs, and the exact steps to take before the clock runs out.

What Is the EU AI Act and Why Does It Matter?

The EU AI Act (Regulation (EU) 2024/1689) is the world’s first binding, comprehensive legal framework for artificial intelligence. Unlike voluntary guidelines or ethical codes, it carries the full force of EU law: it applies across all 27 member states, is enforceable by national regulators, and is backed by significant financial penalties.

The Act takes a risk-based approach: the more risk an AI system poses, the more stringent the compliance obligations. It applies not just to AI developers but to anyone who deploys, imports, distributes, or uses AI systems in a professional context, meaning nearly every enterprise that uses AI tools in its operations is in scope.

Critically, the Act has extraterritorial reach similar to GDPR. If your AI system affects individuals in the EU, you are subject to its requirements even if your company is headquartered outside Europe. This makes EU AI Act compliance a global enterprise concern, not just a European one.

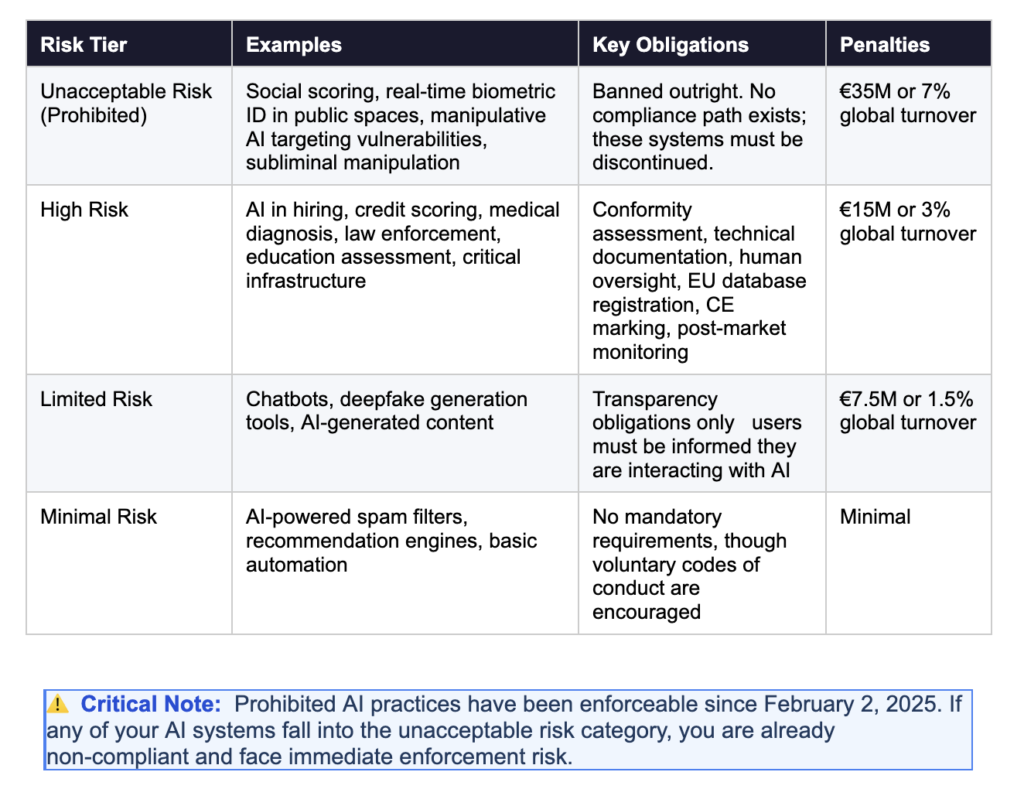

The Four Risk Tiers: Where Does Your AI Sit?

The EU AI Act classifies all AI systems into four risk tiers, commonly described as unacceptable risk (the prohibited practices), high risk, limited risk, and minimal risk. Your tier determines your compliance obligations, your documentation requirements, and your penalty exposure. Getting this classification right is the single most important step in your compliance journey.

What Qualifies as High Risk?

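The high-risk category is where most enterprises will face their most significant compliance burden. Annex III of the Act defines the specific use cases that qualify as high risk, and AI used as a safety component of products covered by EU product legislation, such as medical devices, is also treated as high risk. Examples include, but are not limited to: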

- AI used in recruitment, screening candidates, or evaluating performance in employment contexts

- AI used to evaluate creditworthiness or to assess risk and set pricing for life and health insurance

- AI used in medical devices or for medical diagnosis

- AI used in educational or vocational assessment

- AI used by law enforcement for risk assessment of individuals

- AI used in critical infrastructure management (energy, water, transport)

The key principle is that risk classification is based on intended use and context, not on the underlying technology. The same large language model could be minimal risk when used for internal drafting assistance and high risk when used to screen job applicants. Every AI system requires individual assessment.
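The use-over-technology principle can be illustrated with a minimal Python sketch. Everything here is an assumption made for illustration: the `TRIAGE_TABLE` labels, the `Deployment` type, and the model name are hypothetical, and a lookup like this is only a first-pass triage aid, not a legal classification.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical use-case labels, loosely based on the examples in this section.
TRIAGE_TABLE = {
    "recruitment_screening": RiskTier.HIGH,
    "credit_scoring": RiskTier.HIGH,
    "internal_drafting_assistance": RiskTier.MINIMAL,
}


@dataclass(frozen=True)
class Deployment:
    model_name: str
    intended_use: str


def triage(deployment: Deployment) -> Optional[RiskTier]:
    """Return a first-pass tier, or None when the use needs manual review."""
    # Only the intended use is consulted; the model name is deliberately ignored.
    return TRIAGE_TABLE.get(deployment.intended_use)


# The same (hypothetical) model lands in different tiers depending on its use.
drafting = Deployment("example-llm", "internal_drafting_assistance")
screening = Deployment("example-llm", "recruitment_screening")
print(triage(drafting), triage(screening))
```

Returning `None` for unknown uses, rather than defaulting to the lowest tier, keeps unassessed systems visible until someone reviews them.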

The Complete EU AI Act Compliance Timeline

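Understanding where you are in the EU AI Act’s implementation schedule is critical for prioritizing your compliance investments. The key milestones are: entry into force in August 2024; the ban on prohibited AI practices and the AI literacy requirement in February 2025; General-Purpose AI obligations on August 2, 2025; and full compliance for high-risk AI systems on August 2, 2026.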
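For teams that track deadlines in tooling, the milestones mentioned in this guide can be encoded as data. The sketch below is illustrative only: the function names are hypothetical, and the exact days for the August 2024 and February 2025 milestones are filled in as assumptions (this guide gives only the month), so verify them against the regulation before relying on them.

```python
from datetime import date

HIGH_RISK_DEADLINE = date(2026, 8, 2)

# (effective date, milestone). Day-level dates for the first two rows are assumptions.
MILESTONES = [
    (date(2024, 8, 1), "Regulation enters into force"),
    (date(2025, 2, 2), "Prohibited practices banned; AI literacy requirement applies"),
    (date(2025, 8, 2), "General-Purpose AI obligations apply"),
    (HIGH_RISK_DEADLINE, "High-risk AI system requirements apply"),
]


def active_milestones(today: date) -> list[str]:
    """Milestones whose effective date has already passed (or is today)."""
    return [label for effective, label in MILESTONES if effective <= today]


def days_until_high_risk_deadline(today: date) -> int:
    """Calendar days remaining; negative once the deadline has passed."""
    return (HIGH_RISK_DEADLINE - today).days


if __name__ == "__main__":
    now = date.today()
    for label in active_milestones(now):
        print("In effect:", label)
    print("Days to high-risk deadline:", days_until_high_risk_deadline(now))
```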

Who Is Subject to the EU AI Act? Understanding Your Role

Your obligations under the EU AI Act depend significantly on your role in the AI supply chain. The Act uses specific terminology to distinguish between different actors, and the compliance requirements vary substantially based on which category you fall into.

Providers

Providers develop AI systems and place them on the EU market under their own name or trademark. If your company builds AI products, whether for sale, as part of a service offering, or for internal deployment, you are likely a provider for those systems. Providers carry the heaviest compliance obligations, including conformity assessments, technical documentation, CE marking, and post-market monitoring.

Deployers

Deployers use AI systems in a professional context, meaning any enterprise that uses AI tools to make or inform decisions about customers, employees, or other stakeholders. Deployers have their own set of obligations, including conducting fundamental rights impact assessments for high-risk systems where the Act requires them, ensuring appropriate human oversight, and maintaining usage logs. If you are procuring AI tools from vendors, you are a deployer of those tools.

Importers and Distributors

Importers bring AI systems developed outside the EU into the European market. Distributors make AI systems available without substantially modifying them. Both carry specific obligations to verify provider compliance before placing systems on the market.

💡 Key Insight: Most large enterprises will be both providers (for internally built AI tools) and deployers (for third-party AI tools they purchase). This means the compliance obligations of both roles apply simultaneously, a fact many organizations are unprepared for.

What High-Risk AI Compliance Actually Requires

For enterprises with high-risk AI systems, a category that covers much of the AI used in HR, finance, healthcare, and customer-facing decisions, the August 2026 deadline requires a comprehensive set of technical and governance measures. Here is what compliance actually looks like in practice.

1. Risk Management System

Every high-risk AI system must have a documented risk management system that covers the entire lifecycle from design through decommissioning. This system must identify and analyze known and foreseeable risks, estimate and evaluate risks that may emerge during use, adopt appropriate risk mitigation measures, and test residual risk levels. The risk management system is a living document; it must be updated whenever the system is modified or new risks are identified.

2. Data Governance and Training Data Requirements

High-risk AI systems must use training, validation, and testing data that meets specific quality standards. Data governance practices must cover the design choices for data collection, data preparation operations (labeling, cleaning, enrichment), formulation of assumptions, and examination for possible biases. Organizations must be able to demonstrate that training data is relevant, representative, and as free from errors as possible, and must maintain records to prove it. This is one of the most underestimated compliance burdens, particularly for organizations that have adopted agile development practices with minimal documentation.

3. Technical Documentation (Annex IV)

Annex IV of the EU AI Act specifies the technical documentation that must be prepared before a high-risk AI system is placed on the market. This is comprehensive and includes a general description of the AI system and its intended purpose, design specifications and system architecture, data requirements and training methodology, measures taken to achieve accuracy and robustness, a description of human oversight mechanisms, and performance metrics and testing results. For organizations that have not maintained this level of documentation historically, producing it retrospectively is a significant undertaking, and time is running short.

4. Transparency and User Information

High-risk AI systems must come with clear instructions for use that allow deployers to understand the system’s capabilities and limitations, the populations on which it has been tested, and the conditions under which it can be relied upon. Users who interact directly with AI systems must be informed that they are dealing with AI. Where AI makes decisions that significantly affect individuals, those individuals have the right to explanations.

5. Human Oversight Mechanisms

High-risk AI systems must be designed to enable effective human oversight. This means building interfaces and processes that allow humans to understand and monitor AI outputs, intervene or override AI decisions, and stop the system if necessary. Oversight must be more than theoretical; it must be operationally real. Organizations must train the personnel responsible for oversight and document how oversight is implemented and exercised.
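One way to picture "operationally real" oversight is a thin wrapper that lets a named reviewer override a model suggestion, halt the system, and leave a record of both. This is a minimal sketch under stated assumptions: `OversightGate`, its methods, and the log fields are hypothetical names invented for illustration, not a design prescribed by the Act.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Optional


@dataclass
class OversightGate:
    """Wraps a model so a human can override its output or stop it entirely."""
    predict: Callable[[dict], str]
    halted: bool = False
    audit_log: list = field(default_factory=list)

    def decide(self, case: dict, reviewer: str, override: Optional[str] = None) -> str:
        if self.halted:
            raise RuntimeError("System halted by a human overseer")
        suggestion = self.predict(case)
        # The reviewer's override, when given, always wins over the model output.
        final = override if override is not None else suggestion
        self._record("decide", reviewer, suggestion, final)
        return final

    def stop(self, reviewer: str) -> None:
        """Halt the system; later calls to decide() will raise."""
        self.halted = True
        self._record("stop", reviewer, None, None)

    def _record(self, action, reviewer, suggestion, final) -> None:
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "reviewer": reviewer,
            "model_suggestion": suggestion,
            "final_decision": final,
        })


# Usage with a stand-in model that always suggests "reject".
gate = OversightGate(predict=lambda case: "reject")
accepted = gate.decide({"id": 1}, reviewer="alice")
overridden = gate.decide({"id": 2}, reviewer="alice", override="approve")
```

Logging both the model suggestion and the final decision makes it possible to show later how often oversight was actually exercised.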

6. Accuracy, Robustness, and Cybersecurity

High-risk AI systems must achieve appropriate levels of accuracy, robustness, and cybersecurity. The Act requires testing against reasonably foreseeable misuse, adversarial attacks, and technical errors. For security leaders, this is directly relevant: cybersecurity controls for AI systems are now a regulatory requirement, not just a best practice. This includes resilience against prompt injection, data poisoning, and model extraction attacks.

7. Conformity Assessment and CE Marking

Before a high-risk AI system can be deployed in the EU, it must undergo a conformity assessment to verify that it meets all applicable requirements. For some categories, this can be a self-assessment by the provider. For others, particularly AI systems used as safety components in regulated products, third-party assessment by a notified body is required. Upon successful completion, the provider must affix CE marking and register the system in the EU AI database. Given that conformity assessment can take six to twelve months, organizations that have not started this process are in a precarious position relative to the August 2026 deadline.

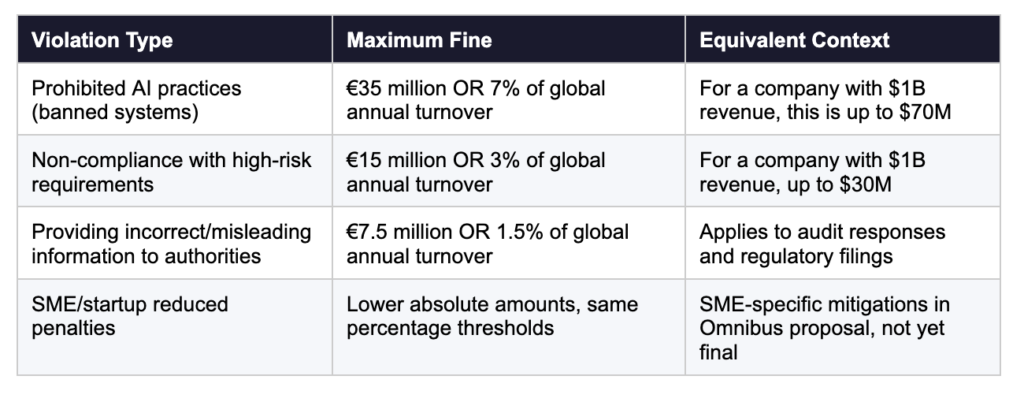

The Penalty Structure: What Non-Compliance Actually Costs

The EU AI Act establishes one of the most aggressive penalty structures of any technology regulation in history, exceeding even GDPR in some categories. Understanding the financial exposure is essential for building the business case for compliance investment.

Beyond financial penalties, enforcement actions can include orders to recall or withdraw AI systems from the market, public disclosure of violations, and operational restrictions that could impact business continuity. Italy’s national AI law (Law No. 132/2025), which entered into force in October 2025, also introduced criminal penalties for certain AI violations, including imprisonment of one to five years for unlawful dissemination of AI-generated content such as deepfakes.
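The headline cap quoted at the start of this guide, up to €35 million or 7% of global annual turnover, whichever is higher, reduces to a one-line calculation. The sketch below is a rough exposure estimate only: the function and parameter names are hypothetical, and it models just that single top-tier cap, not the fine a regulator would actually impose.

```python
def max_fine_eur(global_annual_turnover_eur: float,
                 fixed_cap_eur: float = 35_000_000,
                 turnover_percent: float = 7) -> float:
    """Upper bound of the fine: the higher of the fixed cap and the turnover share."""
    turnover_based = global_annual_turnover_eur * turnover_percent / 100
    return max(fixed_cap_eur, turnover_based)


if __name__ == "__main__":
    for turnover in (200_000_000, 2_000_000_000):
        print(f"Turnover EUR {turnover:,}: cap EUR {max_fine_eur(turnover):,.0f}")
```

For a company with €200 million in turnover, 7% is €14 million, so the €35 million figure is the binding cap. At €2 billion in turnover, 7% is €140 million, and the turnover-based figure takes over.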

Where Most Enterprises Are Falling Short

Analysis of enterprise readiness reveals consistent patterns in where organizations are most unprepared for EU AI Act compliance. Understanding these gaps is the first step toward closing them.

- No AI inventory: Over half of enterprises lack a systematic inventory of AI systems in production or development. Without knowing what AI exists, risk classification and compliance planning are impossible.

- Treating AI as traditional software: Many organizations apply standard software development and procurement practices to AI without recognizing the unique regulatory requirements. Standard vendor security questionnaires are not sufficient for AI Act compliance due diligence.

- Missing design history and data documentation: The technical documentation required by Annex IV demands comprehensive records of design decisions, data lineage, and testing methodologies. Organizations with agile development practices and minimal documentation will struggle to produce this retrospectively.

- Inadequate data governance: High-risk AI compliance requires documented data governance that goes far beyond what most organizations currently maintain, particularly around bias testing and data quality validation.

- No cross-functional governance structure: AI compliance is not a legal problem or an IT problem; it requires legal, security, compliance, data, and business stakeholders working together. Organizations without cross-functional AI governance are structurally unprepared.

- Ignoring vendor obligations: Deployers are responsible for verifying that the AI tools they procure from vendors meet EU AI Act standards. Many organizations have not begun this vendor audit process.

Your EU AI Act Compliance Action Plan: 8 Steps to August 2026

Given the proximity of the August 2026 deadline, every week of inaction increases risk. Here is a structured action plan, in order of priority, that enterprises can use to drive their compliance program forward.

Step 1: Build Your AI Inventory (Weeks 1–3)

Before anything else, you must know what AI systems your organization uses, builds, or procures. Conduct an organization-wide audit that covers all AI tools in use across all departments (not just IT), AI components embedded in existing software and workflows, AI capabilities in SaaS tools that may not be labeled as ‘AI,’ and AI systems under development by internal engineering teams. This inventory is the foundation for everything that follows. Without it, you cannot classify risk, prioritize remediation, or demonstrate compliance to regulators.
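An inventory does not need special tooling to get started; a structured record per system is enough. The sketch below is one possible shape, with every field name, category label, and sample entry invented for illustration. The `likely_role` shortcut follows the rule of thumb in this guide (internally built means provider, procured means deployer) and is no substitute for a proper role assessment.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class AISystemRecord:
    name: str
    department: str
    origin: str        # "internal_build", "vendor_product", or "embedded_in_saas"
    status: str        # "production" or "development"
    intended_use: str

    @property
    def likely_role(self) -> str:
        # Rule of thumb only: built in-house suggests provider, otherwise deployer.
        return "provider" if self.origin == "internal_build" else "deployer"


# Hypothetical sample entries covering the inventory categories described above.
inventory = [
    AISystemRecord("cv-ranker", "HR", "vendor_product", "production",
                   "recruitment screening"),
    AISystemRecord("churn-model", "Marketing", "internal_build", "development",
                   "customer retention scoring"),
    AISystemRecord("helpdesk-assistant", "IT", "embedded_in_saas", "production",
                   "ticket drafting"),
]


def summarize(records: list) -> dict:
    """Count systems per department and per likely role."""
    return {
        "by_department": Counter(r.department for r in records),
        "by_role": Counter(r.likely_role for r in records),
    }


if __name__ == "__main__":
    print(summarize(inventory))
```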

Step 2: Classify Each AI System by Risk Tier (Weeks 3–6)

Once your inventory is complete, classify each system using the EU AI Act’s four-tier framework. For each system, document the intended use case, the population it affects, the decisions it informs or makes, and the potential for harm if it fails or is misused. Where classification is ambiguous, document your reasoning: regulators expect organizations to demonstrate that they made a good-faith effort to classify correctly, even when the classification is not perfectly clear-cut.

Step 3: Conduct a Compliance Gap Analysis (Weeks 4–8)

For each high-risk AI system, compare your current state against the full requirements of the Act: risk management system, data governance, technical documentation, transparency, human oversight, accuracy and cybersecurity controls, and conformity assessment readiness. Use this gap analysis to generate a prioritized remediation roadmap. High-risk systems with the largest gaps should be addressed first.
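The gap analysis described here is essentially a set difference followed by a sort. A minimal sketch, assuming each system's existing evidence is tracked as a set of requirement labels; the labels, system names, and the "most gaps first" ranking are simplifying assumptions, and a real roadmap would also weigh severity and effort.

```python
# Requirement areas listed in this step, as hypothetical labels.
REQUIREMENTS = [
    "risk_management_system",
    "data_governance",
    "technical_documentation",
    "transparency",
    "human_oversight",
    "accuracy_and_cybersecurity",
    "conformity_assessment_readiness",
]


def gaps(evidence: set[str]) -> list[str]:
    """Requirement areas with no evidence yet, in the order listed above."""
    return [req for req in REQUIREMENTS if req not in evidence]


def remediation_order(systems: dict[str, set[str]]) -> list[tuple[str, list[str]]]:
    """Rank systems so that those with the most open gaps come first."""
    ranked = [(name, gaps(evidence)) for name, evidence in systems.items()]
    return sorted(ranked, key=lambda item: len(item[1]), reverse=True)


# Hypothetical current state for two high-risk systems.
current_state = {
    "credit-model": {"risk_management_system", "data_governance",
                     "technical_documentation", "transparency", "human_oversight"},
    "cv-screener": {"transparency"},
}

if __name__ == "__main__":
    for name, missing in remediation_order(current_state):
        print(f"{name}: {len(missing)} gaps -> {', '.join(missing)}")
```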

Step 4: Establish Cross-Functional AI Governance (Weeks 2–6)

EU AI Act compliance is not a project for one team to own. Establish a cross-functional AI Governance Committee that brings together legal, compliance, security, data, and technology leadership. This committee should own the compliance roadmap, approve risk classifications, review technical documentation, oversee the conformity assessment process, and report progress to the board. Without governance infrastructure, compliance efforts will be fragmented and incomplete.

Step 5: Remediate High-Priority Gaps (Months 2–8)

Execute your remediation roadmap, prioritizing high-risk systems with the most significant compliance gaps. Key remediation activities will typically include developing or updating risk management systems and documentation, implementing or strengthening data governance for AI training data, building or formalizing human oversight mechanisms and processes, strengthening cybersecurity controls specifically for AI attack vectors, and updating technical documentation to meet Annex IV requirements.

Step 6: Audit Your AI Vendors (Months 2–5)

As a deployer, you are responsible for verifying that the AI tools you procure meet EU AI Act requirements. Contact your AI vendors and request their EU AI Act compliance documentation. For high-risk systems, this should include their conformity assessment results, CE marking, and EU database registration. Update your vendor contracts to include EU AI Act compliance warranties and audit rights. Where vendors cannot provide satisfactory documentation, develop exit or replacement strategies.

Step 7: Initiate Conformity Assessment (Months 3–6)

For high-risk AI systems where you are the provider, initiate conformity assessment as early as possible. Self-assessment is permitted for many Annex III systems, but it requires comprehensive documentation that must be ready before assessment begins. For systems requiring third-party assessment by a notified body, engage early: demand for notified body services will increase significantly as the August 2026 deadline approaches, creating capacity constraints.

Step 8: Implement Ongoing Monitoring and Employee Training (Months 4–8)

EU AI Act compliance is not a one-time project; it is an ongoing obligation. Establish processes for monitoring AI systems post-deployment, tracking regulatory updates from the EU AI Office and national authorities, maintaining and updating technical documentation as systems evolve, and conducting regular internal audits of compliance status. Compliance also requires employee AI literacy, a requirement that has been in force since February 2025. Training programs should cover what AI systems the organization uses, the risks associated with those systems, employees’ obligations when using or overseeing AI, and how to report concerns or incidents.

EU AI Act Compliance Checklist for Enterprises

Use this checklist to quickly assess your organization’s current compliance status. A ‘No’ on any of these items represents an active gap that requires remediation before August 2026.

Foundational Requirements

- Complete AI inventory exists and is maintained across all business units

- Each AI system has been classified by risk tier with documented rationale

- Cross-functional AI Governance Committee is established and operational

- Prohibited AI practices audit has been completed; no banned systems are in use

- Employee AI literacy training program is in place and documented

High-Risk AI System Requirements

- Risk management system documented for each high-risk AI system

- Data governance documentation meets Annex IV standards

- Technical documentation (Annex IV) drafted and reviewed

- Human oversight mechanisms designed, implemented, and operationally tested

- Cybersecurity controls for AI-specific attack vectors in place

- Conformity assessment process initiated or completed

- EU database registration completed (where required)

Vendor and Third-Party Requirements

- All AI vendors have been contacted and their compliance documentation reviewed

- Vendor contracts updated to include EU AI Act compliance warranties

- Exit or replacement strategy exists for any non-compliant vendor AI systems

General-Purpose AI: What Enterprises Using LLMs Need to Know

General-Purpose AI (GPAI) models, including large language models like GPT-4, Claude, and Gemini, are subject to a specific regulatory regime that has been active since August 2, 2025. If your enterprise uses GPAI models through APIs or embedded in products, here is what you need to know.

Providers of GPAI models must publish technical documentation, maintain model cards, comply with EU copyright law, implement cybersecurity measures, and disclose training data summaries. Twenty-six major AI providers, including Microsoft, Google, Amazon, OpenAI, and Anthropic, signed the GPAI Code of Practice in August 2025. Meta refused and now faces enhanced regulatory scrutiny. When selecting GPAI vendors, enterprises should verify that their vendor has signed the Code of Practice or can otherwise demonstrate compliance.

GPAI models with ‘systemic risk’, defined as those trained using computing power exceeding 10²⁵ floating-point operations (FLOPs), face additional obligations, including adversarial testing (red teaming), incident reporting to the EU AI Office, and enhanced cybersecurity requirements. If your organization is deploying frontier models in critical business processes, ensure your vendor is meeting these enhanced obligations.

The Bottom Line: Time Is the Scarcest Resource in EU AI Act Compliance

The EU AI Act represents the most significant regulatory intervention in the history of enterprise technology, more comprehensive than GDPR, with higher penalties, broader scope, and more complex technical requirements. The August 2026 deadline for high-risk AI systems is not a distant target. For organizations that have not yet built their AI inventory, established governance infrastructure, or begun conformity assessment, it is alarmingly close.

The enterprises that will navigate this landscape successfully are not necessarily those with the most resources. They are those that start now, move systematically, and treat EU AI Act compliance as the strategic governance imperative it is: not a compliance checkbox, not a legal department project, but an organization-wide transformation in how AI is managed, monitored, and held accountable.

Every month of delay increases remediation costs, reduces your window for conformity assessment, and grows your exposure to enforcement. The time to act is not when the deadline is six weeks away. The time to act is now.

Is your enterprise ready for the EU AI Act?

AccuroAI’s AI Security & Governance Platform gives your team the AI inventory management, risk classification, compliance documentation, and ongoing monitoring capabilities you need to meet August 2026 with confidence, not scrambling.